20251214

From today's featured article

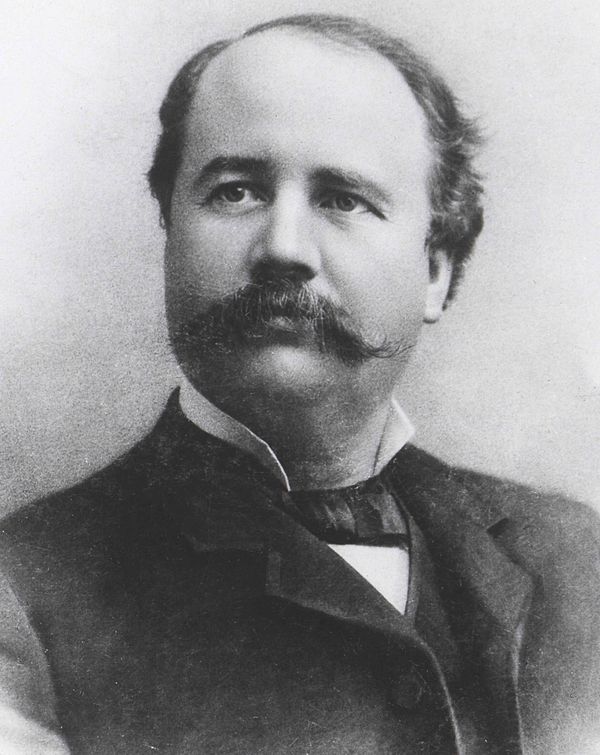

Commander Keen in Invasion of the Vorticons is a three-part episodic side-scrolling platform video game developed by Ideas from the Deep and published by Apogee Software in 1990 for MS-DOS. Tom Hall (pictured) designed the game, John Carmack and John Romero programmed it, and artist Adrian Carmack assisted. The game came about when John Carmack found a way to implement smooth side-scrolling on IBM-compatible PCs, and Apogee's Scott Miller asked the team to develop an original game within three months. The game follows Keen as he runs, jumps, and shoots through various levels, retrieving the stolen parts of his spaceship, preventing an alien ship from destroying landmarks, and hunting down the aliens responsible. Released as shareware, the game sold strongly and was lauded by reviewers for its graphical achievement and humorous style. The team continued as id Software to produce another four episodes of the Commander Keen series and followed up with Wolfenstein 3D and Doom. Invasion of the Vorticons has been re-released several times.

Did you know ...

- ... that Swedish general Lorens von der Linde (pictured) once shocked a British diplomat with his stories of King Charles X Gustav's German liaisons?

- ... that scholars have described the 1946 book The Failure of Technology as a precursor to the environmental movement?

- ... that Romanian far-right journalist Ilariu Dobridor died after being beaten and locked in his own home?

- ... that the Dutch-built and Turkish-operated Birinci İnönü-class submarines were used to discreetly develop German U-boats?

- ... that Dredge is a Lovecraftian horror and fishing video game?

- ... that the killing of an official by seven women was held up as an example of filial piety?

- ... that, when Mariame Clément directed Mozart's Così fan tutte, she froze a wedding tableau during the overture and restarted it in the finale?

- ... that Turkey is facing its worst drought in the last 50 years?

- ... that Nobuhiko Jimbo saved future Philippine president Manuel Roxas from execution during World War II, and Roxas did the same for Jimbo four years later?

In the news

- Clair Obscur: Expedition 33 wins Game of the Year at the Game Awards (producer and host Geoff Keighley pictured).

- In Australia, a ban on the use of certain social media platforms by under-16s comes into effect.

- In motorsport, Lando Norris wins the Formula One World Drivers' Championship.

- In a military offensive, the Southern Transitional Council seizes most of southern Yemen from the government.

On this day

December 14: Martyred Intellectuals Day in Bangladesh (1971), Monkey Day

- 835 – In the Sweet Dew incident, Emperor Wenzong of the Tang dynasty conspired to kill the powerful eunuchs of the Tang court, but the plot was foiled.

- 1925 – Wozzeck (poster pictured) by composer Alban Berg, described as the first atonal opera, premiered at the Berlin State Opera.

- 1948 – American physicists Thomas T. Goldsmith Jr. and Estle Ray Mann were awarded a patent for their cathode-ray tube amusement device, the first interactive electronic game.

- 1960 – Australian cricketer Ian Meckiff was run out on the last day of the first Test match between Australia and the West Indies, resulting in the first tied Test in cricket history.

- 1992 – War in Abkhazia: A helicopter carrying evacuees was shot down during the siege of Tkvarcheli, resulting in at least 52 deaths and catalysing more concerted Russian military intervention on behalf of Abkhazia.

- Niccolò Perotti (d. 1480)

- Giovanni Battista Cipriani (d. 1785)

- Michael Owen (b. 1979)

- Huang Zongying (d. 2020)

Today's featured picture

The leopard seal (Hydrurga leptonyx), also known as the sea leopard, is the second-largest species of seal in the Antarctic, after the southern elephant seal. Its only natural predators are the killer whale and possibly the elephant seal. It feeds on a wide range of prey, including cephalopods, other pinnipeds, krill, birds and fish. Together with the Ross seal, the crabeater seal and the Weddell seal, it belongs to the tribe Lobodontini. This photograph shows a leopard seal in the Antarctic Sound.

Photograph: Godot13