In the background: Putting threads to work

To take best advantage of background processing, multiple cores, and different core types, apps have to be designed to run efficiently in multiple threads that co-operate with one another and with macOS. This article explains the basics of how that works on Apple silicon Macs.

Single thread

Early apps, and many current command tools, run as simple sequences of instructions. Cast your mind back to the days of learning to code in a first language like Basic, and you’d effectively write something like:

```
BEGIN
handle any options passed in command
process data or files until all done
report any errors
END
```

There are still plenty of command tools that work like that, and run entirely in a single thread. One example is the tar command, which dates back to 1979, and its current version in macOS remains single-threaded. As a result it can’t benefit from any additional cores, and runs at essentially the same speed on base, Pro, Max and Ultra variants of an M chip family.

Multiple threads

Since apps gained windowed interfaces, they have been driven primarily by the user, rather than running from BEGIN to END and quitting. The app itself sits in its main event loop, waiting for you to tell it what to do through menu commands, tools or other controls. When you do, the app hands that task over to the code that handles it, and returns to its main event loop. This is much better, as the app remains responsive at all times, even though it may have other threads working frantically in the background on tasks you have given them.

We’ve now divided that simple linear code into at least two parts: a foreground main thread that the user interacts with, which farms work out to worker threads running in the background. Even when run on a single core with multitasking, that keeps the main thread responsive at all times, so it can offer a Stop command, commonly implemented as ⌘-., to signal the worker thread to halt its processing.

Threads come into their own, though, with multiple CPU cores. If the worker code can be implemented so that more than one thread can work on a job at the same time, each core can run a separate thread, and the job completes more quickly. For example, instead of compressing an image one row of pixels at a time, the image could be divided up into rectangles, and each farmed out to be compressed by a separate thread. Alternatively, the compression process could be designed in stages, each of which runs in a separate thread, with image data fed through their pipeline.

Using multiple threads has its own limitations, and there are trade-offs as the number of threads increases, because more work has to be done moving data between threads and the cores they’re running on.

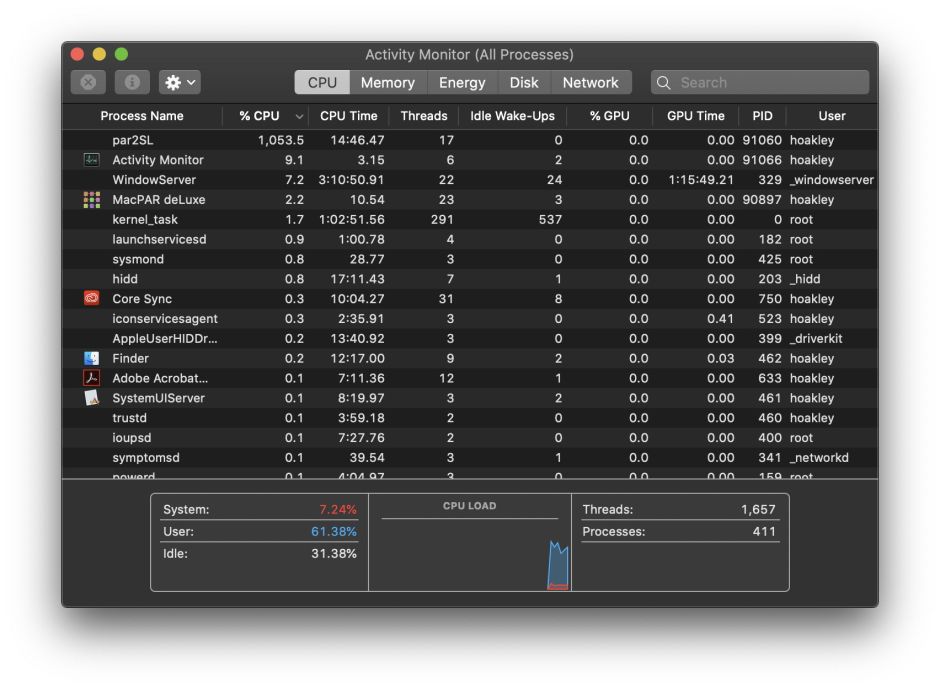

These trade-offs can be seen in a small demonstration involving CPU-intensive floating-point computations in a tight loop:

- running the computation in a single thread, on a single CPU core, normally processes 0.25 billion loops per second

- splitting the total work to be performed into 2 threads increases that to 0.48 billion/s

- in 10 threads, it rises to 2.1 billion/s

- in 20 threads, it reaches a peak of 4.2 billion/s

- in 50 threads, overhead has a negative impact on performance, and the rate falls to 3.3 billion/s.

Those figures are for the 10 P and 4 E cores in an M4 Pro, with threads at high Quality of Service, so they run preferentially on its P cores. In this case, picking the optimum number of threads increased throughput by a factor of 16.8 (4.2 ÷ 0.25 billion loops/s) compared with a single thread.

Core allocation

Scheduling threads is relatively straightforward on CPUs with a single core type: the operating system normally balances load across the cores, guided by a scheme that lets software indicate the priority of its threads. CPUs with two or more core types with contrasting performance characteristics are more complex to manage. For its Alder Lake CPUs, Intel uses an elaborate Hardware Feedback Interface, marketed as Intel Thread Director, that works with operating systems such as Windows 11 and recent Linux kernels to allocate threads to cores.

With its long experience managing P and E cores in iPhones and iPads, Apple has chosen a scheme based on a Quality of Service (QoS) metric, together with software management of core cluster frequency. The developer API is simplified to offer a limited number of values for QoS:

- QoS 9 (binary 001001), named background and intended for threads performing maintenance, which don’t need to be run with any higher priority.

- QoS 17 (binary 010001), utility, for tasks the user doesn’t track actively.

- QoS 25 (binary 011001), userInitiated, for tasks that the user needs to complete to be able to use the app.

- QoS 33 (binary 100001), userInteractive, for user-interactive tasks, such as handling events and the app’s interface.

There’s also a ‘default’ value of 21 (between utility and userInitiated) and an unspecified value of 0, and you might come across others used by macOS.

As a general rule, threads assigned a QoS of background and below will be allocated to run on E cores, while those of utility and above will be allocated to run on P cores when they’re available. Those higher QoS threads may also be run on E cores when P cores are already fully committed, but low QoS threads are seldom if ever promoted to run on P cores.

Internally, macOS uses a different, finer-grained QoS metric that takes other factors into account, but those values aren’t exposed to the developer. The QoS a developer sets in code is treated as a request to guide macOS, not an absolute determinant of which cores that code will run on.

Cluster frequency

P and E cores run at a wide range of frequencies, which macOS sets using more complex heuristics that also involve QoS. In broad terms, P cores running threads do so at frequencies close to their maximum, as do E cores when they’re running high-QoS threads that would otherwise have been run on P cores. However, when E cores are running only low-QoS threads, they do so at a frequency close to idle, for maximum efficiency.

One significant exception is the pair of E cores in M1 Pro and M1 Max chips. To compensate for there being only two of them, they run at a higher frequency when they’re running two or more low-QoS threads. Frequency control of the P cores in M4 Pro chips is also more complicated, as their frequency is progressively reduced as they’re loaded with more threads, presumably to limit the heat generated at those higher loads.

There are times when system conditions, such as Low Power Mode, override a request for code to be run as userInteractive. You can experience that yourself if you try running apps that would normally be given P cores on a laptop with little charge left in its battery. All threads are then diverted to E cores to eke out the remaining battery life, and their cluster frequency is reduced to little more than idle.

Summary

- Developers determine the performance of their code by how it’s divided into threads, and the QoS they assign to those threads.

- QoS advises macOS of the performance expected of each thread, and is a request, not a requirement.

- macOS uses QoS and other factors to allocate threads to specific core types and clusters.

- macOS applies more complex heuristics to determine the frequency at which to run each cluster.

- System-wide policy such as Low Power Mode can override QoS.